What’s a dependency?

A situation in which you need something or someone and are unable to continue normally without them. - Cambridge dictionary

The following three types of software dependencies are not a universal classification in software engineering. However, I find them very useful, especially for reasoning about problems and trade-offs when designing micro-frontends.

- Code. Instructions that a computer can run. It can be distributed as text, binaries, etc. Example in JavaScript (JS):

    import { WebSocketClient } from "@my-org/example"

- Execution context. Resources that are allocated while running code. For instance, running new WebSocketClient() could require a certain amount of memory and a network connection. In the following example, createApp has a dependency on an instance of WebSocketClient. Example in JS:

    createApp({ client: new WebSocketClient({ id: 123 }) })

- Data. Information that is stored in some computer and can be read and possibly written. Example in JS:

    let user = client.read("user")

There are two interesting dimensions that affect dependencies: one is space and the other is time. By space I mean the “physical thing” bits, for instance the X KB of code we download, or the Y bits of data stored somewhere. By time I mean how these dependencies change over time and the effects those changes create. Let’s zoom in on each dependency.

Code

Say we wrote a validateEmail function in our favourite language, and we want to use that function in a program called validatorX written in the same language. Every programming language has some mechanism to reuse code. Once validatorX uses it, validateEmail becomes a dependency of validatorX. In modern JavaScript applications, dependencies are typically imported at build time. By build time I mean the time when our code is “packaged” to be distributed.

Build time is different from runtime, which is the time when our code runs. Here is the thing: in some languages we can import code at runtime as well as at build time, e.g. with JavaScript dynamic imports. At runtime things are dynamic, so we can’t always predict how the system will behave ahead of time. Code imported at runtime is easier to change, but it’s less deterministic: we don’t even know if a given dependency will be available at all! In contrast, a build-time output is static, so we can determine how it will work ahead of time. Importing at build time makes things more rigid but safer.
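Here is a minimal sketch of the difference, assuming a hypothetical module path ./validate-email.js and a hypothetical helper called loadValidator (neither comes from a real library). A static import is resolved when the code is packaged, while a dynamic import() is resolved while the program runs, so the code has to handle the case where the dependency is not available.

```javascript
// Build time: a static import is resolved when the code is packaged.
// (Shown as a comment so this sketch runs on its own.)
// import { validateEmail } from "./validate-email.js";

// Very loose fallback check, used when the real dependency cannot be loaded.
function fallbackValidateEmail(email) {
  return typeof email === "string" && email.includes("@");
}

// Runtime: import() returns a promise that rejects if the module is missing.
// `importer` is injectable only so the sketch can be tried without real files.
async function loadValidator(specifier, importer = (s) => import(s)) {
  try {
    const mod = await importer(specifier);
    return mod.validateEmail;
  } catch (error) {
    // The dependency was not available at runtime, so degrade gracefully.
    return fallbackValidateEmail;
  }
}
```

Calling loadValidator("./validate-email.js") resolves to the real validateEmail when the module can be loaded and to the fallback otherwise. With a static import, a missing module would typically surface as a build error instead.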

In distributed architectures, each independent node in the system typically imports its code dependencies at build time. Then nodes may work in combination with other nodes at runtime in response to some event or request. For example, microservice X v1.0.0 and microservice Y v1.0.0 were built and deployed independently to the cloud. Then an event E triggered some code execution in both microservices X and Y. After that we noticed that there was a bug in microservice X, so we fixed it and deployed X v1.0.1.

Event E’ is dispatched again. It now produces the expected result. Notice that we didn’t build or deploy any code besides microservice X.

To deploy microservice X we need to release code to any computer that runs microservice X. Code can be deployed to all computers at the same time, or incrementally over time, e.g. canary deployment.
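An incremental rollout can be sketched as a function that decides, per machine, which version of microservice X to run. This is a simplified illustration, not how any particular deployment tool works. The names hashToPercent and pickVersion, the shape of the rollout object, and the machine ids are all assumptions made for this example.

```javascript
// Map a machine id to a stable bucket between 0 and 99.
function hashToPercent(machineId) {
  let hash = 0;
  for (const char of machineId) {
    hash = (hash * 31 + char.codePointAt(0)) % 10007;
  }
  return hash % 100;
}

// Machines whose bucket falls below canaryPercent get the new version.
function pickVersion(machineId, rollout) {
  return hashToPercent(machineId) < rollout.canaryPercent
    ? rollout.canary
    : rollout.stable;
}

const rollout = { stable: "1.0.0", canary: "1.0.1", canaryPercent: 10 };
const machines = ["machine-a", "machine-b", "machine-c"];

for (const id of machines) {
  console.log(id, "runs microservice X v" + pickVersion(id, rollout));
}
```

Raising canaryPercent step by step moves more machines to v1.0.1, and setting it to 100 completes the deployment. Because the bucket depends only on the machine id, a machine that already received the new version keeps it as the percentage grows.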

On the frontend, if we want to break up a system into distributed parts we can apply similar heuristics. Each micro-frontend would import most of its dependencies at build time. When a user navigates to a given page then micro-frontend X would work in combination with micro-frontend Y at runtime to produce the expected UI. If we find a bug in micro-frontend Y, we could fix it and deploy micro-frontend Y to each browser that is running it without having to build or deploy anything else.
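To illustrate, here is a minimal sketch of runtime composition, assuming a hypothetical in-memory registry. The names register and renderPage are invented for this example, and real micro-frontends would render DOM rather than strings.

```javascript
// Registry of the micro-frontends currently available in this browser.
const registry = new Map();

// "Deploying" a micro-frontend replaces only its own entry.
function register(name, version, render) {
  registry.set(name, { version, render });
}

// At runtime the page combines whatever versions are registered.
function renderPage(names) {
  return names
    .map((name) => {
      const { version, render } = registry.get(name);
      return `[${name}@${version}] ${render()}`;
    })
    .join("\n");
}

register("X", "1.0.0", () => "Header");
register("Y", "1.0.0", () => "Lst of posts"); // bug: typo in the UI
console.log(renderPage(["X", "Y"]));

// Fix and redeploy Y only; X is neither rebuilt nor redeployed.
register("Y", "1.0.1", () => "List of posts");
console.log(renderPage(["X", "Y"]));
```

The second render picks up the fixed Y while X stays at v1.0.0, which mirrors the microservice example earlier in this section.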

Execution context

Imagine that we have a function connectWs() that creates a WebSocket connection to a remote computer. Let’s say that we call connectWs() from 2 different microservices that run on 2 different machines, and we also call it from 2 different micro-frontends that run in a single web browser. Let’s increase both microservices and micro-frontends from 2 to 200. Do you see a problem?

Scalability is an issue for the browser because we can’t scale the computer that runs our code, in contrast to microservices, where we could potentially provision more resources.

If we split a system into fully independent parts, each part should be able to scale independently. Microservices typically don’t share an execution context, which makes them more resilient, more scalable, and easier to change, among other benefits. In the case of micro-frontends we have an important limitation: all the micro-frontends run in the same web browser. This means that they all run under the same execution context.

In the previous example, should we couple each micro-frontend by using the same web socket connection? This way it could scale. Wait, what if tomorrow a micro-frontend wants to use a different type of connection? What if micro-frontend X breaks a shared connection? OK, let’s create multiple connections since we probably won’t have more than 10 micro-frontends simultaneously in the same browser, and modern browsers can handle 10 connections. Wait, can mobile devices handle that? Do we pay per connection? Are we increasing the bill 10x?

The point I’m trying to make is, you have to make a trade-off based on your use case. In any case, the less execution context that you share in a micro-frontends architecture, the better.
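The two options can be compared with a small sketch. It assumes a stand-in connectWs that only counts how many connections were opened instead of touching the network, plus a hypothetical createSharedConnector helper that reuses one connection per URL. The URL is a placeholder.

```javascript
let openConnections = 0;

// Stand-in for connectWs(): no real network, it only counts connections.
function connectWs(url) {
  openConnections += 1;
  return { url, id: openConnections };
}

// Hypothetical helper: returns a connect function that reuses one connection per URL.
function createSharedConnector(connect) {
  const connectionsByUrl = new Map();
  return (url) => {
    if (!connectionsByUrl.has(url)) {
      connectionsByUrl.set(url, connect(url));
    }
    return connectionsByUrl.get(url);
  };
}

const url = "wss://example.com/socket";

// Option 1: each of the 200 micro-frontends opens its own connection.
for (let i = 0; i < 200; i += 1) connectWs(url);
console.log("independent connections:", openConnections); // 200

// Option 2: all micro-frontends go through one shared connector.
openConnections = 0;
const sharedConnect = createSharedConnector(connectWs);
for (let i = 0; i < 200; i += 1) sharedConnect(url);
console.log("shared connections:", openConnections); // 1
```

Sharing brings the count down from 200 to 1, but every micro-frontend now depends on the same object. If one of them closes or breaks that connection, all the others are affected, which is exactly the coupling the questions above are about.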

Data

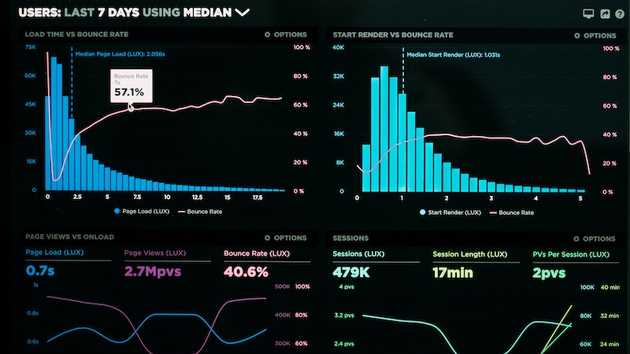

Consider an application with a distributed architecture and millions of users. It’s made of two microservices: user and post. Each depends on a shared piece of data called username. Each microservice has its own database (DB) and a copy of each username in it. Both microservices are completely independent. If the user microservice is down, the post microservice can still return posts and include the username of the author.

We made a few trade-offs. We increased availability but we need to pay for and maintain more data storage. If the user microservice changes a username, it might take some time for the post microservice to sync its DB. We know that at some point the username will be consistent across the two DBs. We call this eventual consistency and it’s a model widely used in distributed systems to achieve high availability.
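Here is a minimal in-memory sketch of that trade-off. The Maps stand in for the two databases and the pendingEvents array stands in for whatever mechanism syncs them. All names and sample values are hypothetical.

```javascript
// Each microservice owns its own copy of the usernames.
const userDb = new Map([["u1", "alice"]]);
const postDb = {
  usernames: new Map([["u1", "alice"]]),
  posts: [{ id: "p1", authorId: "u1", text: "Hello" }],
};

// Changes made by the user microservice that post has not applied yet.
const pendingEvents = [];

function changeUsername(userId, username) {
  userDb.set(userId, username);
  pendingEvents.push({ userId, username });
}

// The post microservice answers from its own DB, even if user is down.
function getPosts() {
  return postDb.posts.map((post) => ({
    ...post,
    username: postDb.usernames.get(post.authorId),
  }));
}

// Runs some time later and brings the post DB up to date.
function syncPostDb() {
  while (pendingEvents.length > 0) {
    const { userId, username } = pendingEvents.shift();
    postDb.usernames.set(userId, username);
  }
}

changeUsername("u1", "alice2");
console.log(getPosts()[0].username); // alice (stale until the sync runs)
syncPostDb();
console.log(getPosts()[0].username); // alice2 (now consistent)
```

Between changeUsername and syncPostDb the two copies disagree. That window is the price paid for letting the post microservice answer without calling the user microservice.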

Is eventual consistency a bad user experience? If user X changes their username and user Y sees a post from user X right afterwards, user Y might not see the latest username. This is not a terrible experience, because user Y might not know that user X’s username changed. What happens if user X changes their username and immediately looks at their own posts? In that case the UI might display two different usernames at the same time, which is a bad user experience.

When the UI changes the username and receives an OK from the backend, it knows what the latest username is. In single-page applications or native apps, we can store the current username on the client after we update it and display that value, which solves the eventual consistency issue in this case.
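A rough sketch of that idea, assuming a fake backend whose post endpoint still returns the old username for a while. The names fakeBackend, session, and displayUsername are made up for illustration.

```javascript
// Fake backend: the user service accepts the change,
// but the post service still returns the old username.
const fakeBackend = {
  updateUsername(userId, username) {
    return { ok: true, userId, username };
  },
  getPosts() {
    return [{ id: "p1", authorId: "u1", username: "alice" }];
  },
};

// Client-side state for the signed-in user.
const session = { userId: "u1", username: "alice" };

function changeUsername(username) {
  const response = fakeBackend.updateUsername(session.userId, username);
  if (response.ok) {
    // The backend confirmed the change, so the client knows the latest value.
    session.username = response.username;
  }
}

// For the user's own posts, prefer the value the client already knows.
function displayUsername(post) {
  return post.authorId === session.userId ? session.username : post.username;
}

changeUsername("alice2");
const [post] = fakeBackend.getPosts();
console.log(post.username); // alice (stale value from the post service)
console.log(displayUsername(post)); // alice2
```

Posts from other authors still show whatever the post service returns, so this only hides the inconsistency for the user who made the change.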

If our single-page application is made of two different independent micro-frontends: post and user, should we replicate that username data in each micro-frontend just like we did in our microservices DBs? If we replicate that username in each micro-frontend we are possibly creating a consistency issue again. Should we instead share the same data across micro-frontends? The answer to these questions is another post in itself, so I’ll leave it here for now.
